The pivotal transition to Google Analytics 4 (GA4) is coming this year. Much work has already gone into educating teams on new data streams, how to build events, and the tricks of a new interface, but there is more to do to prepare for the switch. GA4 is not entirely comparable to its predecessor, Universal Analytics, particularly when it comes to filtering data: it only allows property filtering by host domain. This renders much of the complex filtering previously in place obsolete. Since we are all facing this predicament, it’s paramount that we proactively address the issue by investigating what is different about GA4 filtering and how to create parity with existing tools.

Before diving into the data’s intricacies, it is essential to remind ourselves of an important fact – Universal Google Analytics and Google Analytics 4 are distinct analytics solutions, and we should not expect the data to match precisely. However, we should be able to look at the two data sources and see similar trends in traffic and user activity.

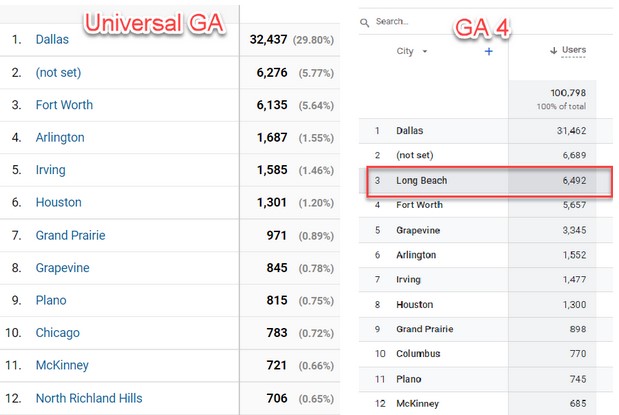

To start, let’s examine the city reporting in Google Analytics. Google uses a third-party location service to populate the locations in Google Analytics. That location information is based entirely on IP address and should be treated as an approximation of the actual location.

In the graphic above, we see the city report for a dealership located in Texas. What’s interesting to note is the influx of traffic coming from Long Beach, CA. This traffic comes from a website crawler that recently hit the site. It is not real traffic, and in Universal GA, it can be filtered out. In GA4, however, we’re unable to filter it out of the stream, so the user reporting in GA4 is now off by about 6,000 users. While filtering will always allow some traffic through, 6,000 is a significant number that can accumulate over time and ultimately impact all your website data.

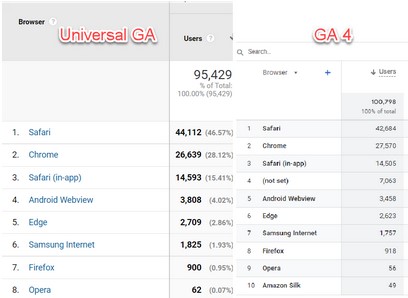

When we examine the browser report above, the same issue reappears. A browser of “not set” has historically been the sign of a bot or crawler visiting your website. If you have ever experienced “ghost spam” in your analytics, you know it’s a similar issue. In this example, there are over 7,000 users with a “not set” browser, which includes the crawler traffic plus other traffic that gets past Google’s built-in bot filtering. Once again, leaving this traffic unfiltered will negatively impact all your reporting over time.

Are you wondering how to ensure your data is clean and accurate? Fear not, for the Google Whisperer is here with some ideas. If you are using BigQuery or a similar tool to store your data, you can apply filters after the fact to exclude unwanted data from a table. To ensure maximum transparency, keep detailed notes of what data was filtered out and when. Similarly, if you are using Looker Studio for reporting, filters can be applied to the entire report to weed out any unwanted data.
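To make the "filter after the fact, and keep notes" idea concrete, here is a minimal sketch in Python. It is not the BigQuery export schema or any Google API: the row fields (`city`, `browser`, `users`), the sample numbers, the date, and the helper name `apply_filters` are all hypothetical and chosen only for illustration. The same logic would normally live in a query or a report-level filter in your own tool.

```python
from datetime import date

# Hypothetical, made-up rows. Field names are illustrative only and do not
# reflect the real GA4 export schema.
ROWS = [
    {"city": "Dallas", "browser": "Chrome", "users": 1200},
    {"city": "Long Beach", "browser": "(not set)", "users": 6000},
    {"city": "Houston", "browser": "Safari", "users": 800},
    {"city": "Austin", "browser": "(not set)", "users": 1000},
]

# Exclusion rules: field name -> set of values to drop.
EXCLUDE = {"city": {"Long Beach"}, "browser": {"(not set)"}}


def apply_filters(rows, exclude, applied_on):
    """Split rows into kept rows and an audit log of what was removed."""
    kept, audit = [], []
    for row in rows:
        # Collect every rule this row matches, so the notes explain why it went.
        reasons = [
            f"{field}={row[field]}"
            for field, values in exclude.items()
            if row.get(field) in values
        ]
        if reasons:
            audit.append(
                {"row": row, "reasons": reasons, "applied_on": applied_on.isoformat()}
            )
        else:
            kept.append(row)
    return kept, audit


# The date here is arbitrary; in practice, record when the filter was applied.
kept, audit = apply_filters(ROWS, EXCLUDE, date(2023, 3, 1))
removed_users = sum(entry["row"]["users"] for entry in audit)

print(f"Kept {len(kept)} rows, removed {len(audit)} rows ({removed_users} users)")
for entry in audit:
    print(entry["applied_on"], entry["reasons"])
```

The audit list is the point of the exercise: it records which rows were dropped, which rule caught them, and when, so anyone comparing the cleaned numbers to the raw GA4 numbers can see where the difference comes from.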

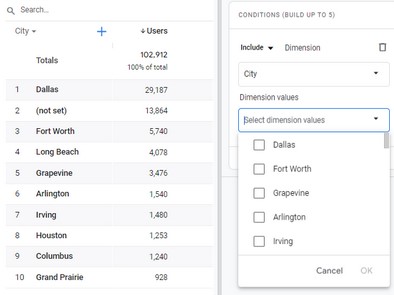

Google does allow filtering on reports in the GA4 interface, but not all of the data shows up as filterable. This is demonstrated in the example above, where we attempt to enter a filter of equals “not set”.

Rest assured, Google is committed to addressing the filtering issue in GA4 and has said it is making this a priority for 2023. With the countless releases and updates made for GA4 since 2020, we can expect to see even more in the coming months, especially with the anticipated transition to GA4 in July. While we wait for these updates, know that the Google Whisperer will be here to assist and provide solutions to any hiccups we may encounter. Together, we can ensure that our data is as clean and accurate as possible and make the most of the new opportunities presented by GA4.